Caulking

However tightly the planks of a wooden boat are fitted together, they will leak. They need caulking: string or hemp is forced between the planks and then sealed with tar to make the hull waterproof. Without caulking, a timber boat leaks and you have to bail out the water. How leaky are your projects when they launch? Or are you happy to keep bailing out, or let your client bail out, while you move on to the next project?

Caulking websites

Throughout a project’s life cycle, whether it’s a brand-new site or a redesign, it is wise to plan for the pre-launch preparation and post-launch maintenance activities that fall outside the normal design, development and content work.

There is normally some kind of approval sequence required to launch a site – at a minimum, approval from the client that they are happy for the site to go live – but there may also be approvals from legal, editorial and other teams, each looking at a different aspect of the site to see whether there is any risk in letting it go live.

You need to think about how much caulking you may need to do to achieve these approvals: how much you budgeted for it (time, resource and cost), how often the client is going to come back to you when they spot issues, and how much the quality of the work matters to your reputation.

Good caulking at the end of a project is vital, but if you factor it in too late then you will be bailing out. A lot.

Because part of your responsibility is ensuring the site is technically sound, you will be looking for risks to the site. There are many ways to sink the boat, so consider the potential consequences at each stage.

Pre-launch preparations

Let’s start with code.

HTML

There are some fundamentals you can do easily. Make it W3C compliant: validate it. I know this is no longer fashionable in some circles, but it is a mark of your expertise and, more importantly, it will help those who work on the code after you. The doctype declares which version of HTML the page is written in, so please stick to it.

CSS

Again, make it validate. Browsers silently discard any CSS they can’t parse, so a single error can quietly stop a rule from working. Structure your CSS so that it can be read and worked on easily; consider SMACSS, Jonathan Snook’s Scalable and Modular Architecture for CSS.

If you’re using a framework, document its version and release date so it can be updated in the future.

JavaScript

JavaScript is insanely great, but it is actually voodoo. (Well, to me it is.) Think through your use of it: don’t rely too heavily on JavaScript, and always provide alternatives for the people and apps not using it, which happens more often than you think. I knew of a site in Hong Kong that used JavaScript for absolutely everything; even the HTML was delivered as document.write statements:

```javascript
document.write("");
```

It worked, of course, but nobody bothered to look under the bonnet to see what was going on, and it turned out that only one person could make updates. The risk factors were massive.

Code needs good discipline while you are working on it. Naturally, standards won’t be at the forefront of your thought process (you will be trying to work out how to make some unfathomable responsive layout work properly, or troubleshooting some irritating function), but coding to standards will help with that anyway. Whenever you take a breather, make a commit or come to the end of a session, do a quick validation of your code so far. It will save time later, when you or the project are short of it, and you will feel the sparkles of love from the next person who needs to dive into your code and adjust it.

Site maps

I can’t stress enough how important these are throughout a project’s life cycle. They are the site’s blueprints. There are three kinds of site map that I use, and each has a function:

- a content map

- a URL map

- an XML sitemap

Content maps

Content maps are a brilliant way for the business to understand the lumps of content and functions on a site and the relationships between them. But a content map should never be used for a site build – convert that content map into a URL map.

URL maps

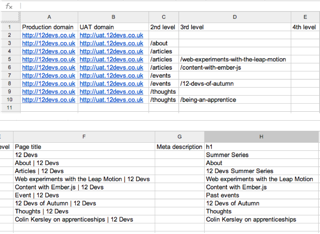

Follow the content map with a proper URL map: a simple spreadsheet with the following columns:

Production domain | UAT domain | 2nd level | 3rd level | 4th level | page title | meta description | h1

This gives us a list of all the page URLs expected on the site, which helps the developers and content teams building out the site get the URLs right. Many content management systems allow several URL variants for the same page, and you don’t want duplicate content being indexed by the search engines. You also need a URL naming strategy.
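As an illustration of a URL naming strategy, here is a minimal sketch assuming one possible convention (lowercase ASCII words joined by hyphens, no trailing slash). The `slugify` and `buildUrl` helpers and the example domain are hypothetical; swap in whatever rules your team agrees.

```javascript
// Hypothetical naming convention: lowercase ASCII words joined by hyphens,
// no file extensions, no trailing slash.
function slugify(text) {
  return text
    .normalize("NFKD") // split accented letters into base letter + accent
    .replace(/[\u0300-\u036f]/g, "") // drop the accents
    .toLowerCase()
    .replace(/&/g, " and ")
    .replace(/[^a-z0-9]+/g, "-") // any run of other characters becomes one hyphen
    .replace(/^-+|-+$/g, ""); // trim hyphens from both ends
}

// Build one row's full URL from its domain and 2nd/3rd/4th level columns.
function buildUrl(domain, levels) {
  const path = levels.filter(Boolean).map(slugify).join("/");
  return `https://${domain}/${path}`;
}

console.log(buildUrl("www.example.com", ["About Us", "Our Team", ""]));
// https://www.example.com/about-us/our-team
```

Generating every URL in the map from its level columns with one function means developers and content editors can’t each invent their own variant.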

It might get a bit more complex if you have a dynamic site, but the main reason for this map is to understand what the search engines will crawl and how; hence the page title, meta description and h1 columns. There are recommended minimum, maximum and optimal lengths for the full URL and for each component listed. I work with the business client to get these components right. Sometimes they want to write them, which is great but also a bit scary; other times we have specialist writers do this part.
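The length checks can be automated once the spreadsheet is exported. This sketch assumes each row has been read into an object with `title`, `description` and `h1` fields; the character limits are common rules of thumb rather than published figures (search engines actually truncate by pixel width), so treat them as placeholders.

```javascript
// Assumed limits in characters: rules of thumb only. Search engines truncate
// by pixel width, so adjust these to your own guidelines.
const LIMITS = {
  title: { min: 10, max: 60 },
  description: { min: 50, max: 160 },
  h1: { min: 1, max: Infinity }, // just has to be present
};

// Return human-readable problems for one exported URL-map row.
function checkRow(row) {
  const problems = [];
  for (const [field, { min, max }] of Object.entries(LIMITS)) {
    const length = (row[field] || "").trim().length;
    if (length < min) problems.push(`${field} too short (${length} chars)`);
    if (length > max) problems.push(`${field} too long (${length} chars)`);
  }
  return problems;
}

// Reports the too-short description and the missing h1.
console.log(checkRow({ title: "About us | Example Ltd", description: "Who we are.", h1: "" }));
```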

I recently came across a site where this wasn’t done: one page had nine different URLs for the same piece of content. It’s difficult and costly to go back and redo a big site’s URL architecture, and the problem is normally discovered the day before launch, so get it right from the start.
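To spot that kind of duplication in a crawl export, one approach is to normalise each URL and group the ones that collapse to the same key. The normalisation rules below (force https, drop `www.`, query strings and fragments, lowercase the path, strip `index.html` and trailing slashes) are assumptions about typical CMS variants; lowercasing in particular is only safe if your server treats paths case-insensitively.

```javascript
// Assumed normalisation rules for common CMS URL variants; adjust to your site.
function normalizeUrl(raw) {
  const url = new URL(raw);
  url.protocol = "https:"; // http and https versions are the same page
  url.hostname = url.hostname.replace(/^www\./, ""); // www and non-www
  url.search = ""; // assumes query strings never select different content
  url.hash = "";
  let path = url.pathname.toLowerCase(); // assumes a case-insensitive server
  path = path.replace(/\/index\.(html?|php)$/, "/"); // /about/index.html -> /about/
  if (path.length > 1) path = path.replace(/\/+$/, ""); // drop trailing slashes
  url.pathname = path;
  return url.toString();
}

// Group raw URLs by their normalised form; keep only groups with duplicates.
function findDuplicates(urls) {
  const groups = new Map();
  for (const raw of urls) {
    const key = normalizeUrl(raw);
    if (!groups.has(key)) groups.set(key, []);
    groups.get(key).push(raw);
  }
  return [...groups].filter(([, variants]) => variants.length > 1);
}

const crawled = [
  "http://www.example.com/About-Us/",
  "https://example.com/about-us",
  "https://example.com/about-us/index.html?ref=nav",
  "https://example.com/contact",
];
console.log(findDuplicates(crawled)); // one group: three variants of /about-us
```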

The URL map is where you do the basic on-site SEO work. Without it, no amount of inbound links will help. Get the basics right and the rest comes easily; going back to fix them later is costly and disruptive.

Sitemap xml

This is a post-launch caulk. Some people build it before launch, but I like to get a site live, or at least into UAT, then crawl it with sitemap software to generate the XML sitemap, which is then uploaded and submitted in Google Webmaster Tools.
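If you would rather generate the file from the URL map than from a crawl, a sketch using the sitemaps.org format might look like this. It is an alternative to the crawl-based approach, not a replacement for checking the live site; the page list is illustrative and `lastmod` is optional.

```javascript
// Escape the characters XML treats specially (URLs often contain &).
function escapeXml(text) {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&apos;");
}

// Build a sitemaps.org <urlset> from a list of { loc, lastmod? } entries.
function buildSitemap(pages) {
  const entries = pages.map(({ loc, lastmod }) => {
    const lastmodTag = lastmod ? `\n    <lastmod>${lastmod}</lastmod>` : "";
    return `  <url>\n    <loc>${escapeXml(loc)}</loc>${lastmodTag}\n  </url>`;
  });
  return [
    '<?xml version="1.0" encoding="UTF-8"?>',
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">',
    ...entries,
    "</urlset>",
  ].join("\n");
}

console.log(buildSitemap([
  { loc: "https://www.example.com/", lastmod: "2014-12-01" },
  { loc: "https://www.example.com/search?q=boats&page=2" },
]));
```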

SEO

Page titles

Page titles need to be unique. If you’re going to include the site name, put it last, not first, so that if the search engines truncate a long title it’s the site name that gets cut.
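A small sketch of both rules: put the site name after the page name, and flag any title used by more than one URL. The ` | ` separator, helper names and example data are assumptions.

```javascript
// Site name goes last, so truncation cuts the brand rather than the page name.
function pageTitle(pageName, siteName, separator = " | ") {
  return `${pageName}${separator}${siteName}`;
}

// Report any title shared by more than one URL.
function duplicateTitles(pages) {
  const byTitle = new Map();
  for (const { url, title } of pages) {
    byTitle.set(title, [...(byTitle.get(title) || []), url]);
  }
  return [...byTitle].filter(([, urls]) => urls.length > 1);
}

const pages = [
  { url: "/about-us", title: pageTitle("About us", "Example Ltd") },
  { url: "/about-us/our-team", title: pageTitle("About us", "Example Ltd") }, // copied title
  { url: "/contact", title: pageTitle("Contact", "Example Ltd") },
];
console.log(duplicateTitles(pages));
```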

Meta data

The meta description is often what appears in the search results, so it is a mini advert for the page. It needs to be well written and about the content of that page, not the whole site.

Keywords

No longer significant for search rankings. Worse, they can help competitors, who will easily pick up on the keywords you are trying to optimise for (though you could put fake ones in…). I no longer create these.

H1

This is the primary content title. In theory there can be more than one h1, but look at the page’s content outline to understand the content hierarchy before you use more than one. Don’t use an h1 to hold a logo…

Webmaster tools

Google search is currently the biggest referrer for most sites, the exceptions being China and a couple of other nations. Not having a Google Webmaster Tools account to check the health of your site as Google sees it is a drawback. Google and Bing both have good webmaster tools, and others may be more appropriate for localised markets; Baidu is a must if your target market is China. There are several ways to verify a site, but the DNS method (a CNAME or TXT record) is the most robust, because deleting a verification file or meta tag can cause your site to lose verification in the future.
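Once the TXT record is in place, you can confirm it has propagated before clicking verify. This sketch uses Node’s `dns.promises.resolveTxt`, which returns each record as an array of string chunks; the `google-site-verification=` prefix is the format Google’s DNS method uses, and the domain and token are placeholders.

```javascript
const dns = require("dns").promises;

// resolveTxt gives each TXT record as an array of string chunks; join them
// before comparing, because long records can be split across chunks.
function hasVerificationRecord(txtRecords, expected) {
  return txtRecords.some((chunks) => chunks.join("") === expected);
}

async function checkVerification(domain, token) {
  const records = await dns.resolveTxt(domain); // real DNS lookup
  return hasVerificationRecord(records, `google-site-verification=${token}`);
}

// Usage (placeholder domain and token):
// checkVerification("example.com", "YOUR-TOKEN").then((ok) => console.log(ok));
```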

Webmaster tools will help you get your site indexed quickly and then show you how well it is performing in searches. Even if you’re not contracted to follow up after a site goes live, as a courtesy you should hold a post-launch follow-up meeting and at least bring along a short report on how the site has performed so far.

SEO positioning

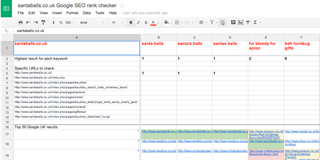

Prior to the launch of a site get an idea of how the intended keywords and long-tail phrases are doing in the search engines. I use a Google Docs spreadsheet to check terms and URLs against Google to understand rankings. Get this in place so you have a base to measure against after launch.

For relaunched sites, do the same, but check how the current site fares against its peers. There is a great write-up on this by Tara Stockford on gov.uk.

Canonical links

Should things go badly wrong with your setup and you end up with multiple URLs for the same content, or worse, if someone starts scraping content from your site for use on another, you will need to add a canonical link element (<link rel="canonical">) stating the correct full URL of the page. This tells search engines that come across any duplicates which URL is the original.
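The element itself is a single line in the page head. A minimal sketch of generating it, assuming you pass in the preferred absolute URL from the URL map (`canonicalTag` is a hypothetical helper):

```javascript
// Build the canonical <link> for a page from its preferred absolute URL.
function canonicalTag(preferredUrl) {
  // new URL() throws on relative or malformed input, which is what we want:
  // the canonical href should be the full URL.
  const href = new URL(preferredUrl).href;
  const escaped = href.replace(/&/g, "&amp;").replace(/"/g, "&quot;");
  return `<link rel="canonical" href="${escaped}">`;
}

console.log(canonicalTag("https://www.example.com/about-us"));
// <link rel="canonical" href="https://www.example.com/about-us">
```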

Accessibility

Bothering to make your site WCAG compliant? It might be a good idea. Even if you don’t care about people using screen readers (and if you don’t, you should leave web development and go into estate agency or recruitment), you need to know that the biggest users of accessibility techniques are usually search engine bots, and you need them to be able to ‘read’ (index) your site correctly. The one thing I will add is that what is good for accessibility is normally very good for SEO too.

Laura Kalbag has written an excellent piece for 24 Ways on reasons you should bother with accessibility.

Other considerations

Google maps

If the site is for a business with a physical presence (a shop, office, etc.), put it on Google Maps. This is really easy, can be done before the site launches and will help get the site indexed quickly.

Domain holding page

For a new site, consider deploying a holding page with a small Mailchimp or Campaign Monitor registration form for a mailing list, so that people can hear when the site launches. The Google Maps entry will then have a destination page too.

Spell check!

Triple-check your spelling. It’s not just about looking professional: people don’t deliberately search using misspelled words, and Google happily autocorrects commonly misspelled search terms, so a misspelled page is less likely to match what people search for.

Speed testing

Cut down on requests back to the server. Two main CSS links only, please (not including CSS for older browsers where needed): one for screen and one for print. If you have more, consider pre-processing them into one minified file. You all pre-process with Sass and Less nowadays, don’t you…

Image compression

There are several tools for this. On the Mac I use ImageOptim and JPEGmini, and lately a technique of exporting from Photoshop at twice the required size with the JPEG quality set to 0%. This gives a surprisingly good image at a file size comparable to, and sometimes smaller than, an export at the correct size, and it is apparently good for retina screens.

Andy Clarke recently discovered some interesting issues with iOS and images larger than 3 megapixels, which fall foul of iOS’s 3-megapixel resource limit. Be aware of issues like these and get them documented.
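A quick way to catch offending images before launch is to compare width × height against the limit. This sketch assumes the figure Apple’s Safari Web Content Guide gave for decoded GIF, PNG and TIFF images on older devices with under 256 MB of RAM (3 × 1024 × 1024 pixels), and that you already have each image’s dimensions from your build tooling; the file names are illustrative.

```javascript
// Assumed limit: 3 * 1024 * 1024 decoded pixels, the figure Apple documented for
// GIF/PNG/TIFF on older iOS devices with under 256 MB of RAM. Check your targets.
const IOS_PIXEL_LIMIT = 3 * 1024 * 1024;

// Return a note for each image whose decoded size exceeds the limit.
function overIosLimit(images) {
  return images
    .filter(({ width, height }) => width * height > IOS_PIXEL_LIMIT)
    .map(({ file, width, height }) => `${file}: ${((width * height) / 1e6).toFixed(1)} MP`);
}

console.log(overIosLimit([
  { file: "hero@2x.png", width: 2880, height: 1200 }, // 3,456,000 px: flagged
  { file: "logo@2x.png", width: 400, height: 120 },
]));
```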

Number of clicks from home page

How deep is your site? People still like to browse through websites, so effective and shallow navigation is the key.
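Click depth can be measured rather than guessed: a breadth-first search from the home page over the site’s internal links gives each page’s minimum number of clicks. This sketch assumes the links have already been extracted (for example from a crawl export) into a hypothetical page-to-links map.

```javascript
// Breadth-first search from the home page: the first time a page is reached
// is via the shortest path, so that depth is its minimum click count.
function clickDepths(links, home = "/") {
  const depth = new Map([[home, 0]]);
  const queue = [home];
  while (queue.length > 0) {
    const page = queue.shift();
    for (const next of links[page] || []) {
      if (!depth.has(next)) {
        depth.set(next, depth.get(page) + 1);
        queue.push(next);
      }
    }
  }
  return depth;
}

const links = {
  "/": ["/products", "/about-us"],
  "/products": ["/products/boats"],
  "/products/boats": ["/products/boats/dinghy"],
};
const depths = clickDepths(links);
console.log(depths.get("/products/boats/dinghy")); // 3
```

Any page in the URL map that never appears in the result can’t be reached by clicking from the home page at all.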

Social media links

Facebook, Pinterest, Tumblr, Instagram, Twitter, etc. These are increasingly important, but they need to be tied into the site’s social media strategy.

Call to action

Telephone numbers? iOS automatically turns them into links on devices with phone capabilities, so what’s your link colour? This can catch you out if you’re not expecting it, so test. Also look at dead-end user journeys through the site (form completion pages, error pages, etc.), as these need ways to hook the user back into the site, or at least to prompt some positive action that keeps them engaged.
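One way to stay in control is to mark numbers up as explicit tel: links you can style yourself (or, if you want no automatic linking at all, iOS Safari honours `<meta name="format-detection" content="telephone=no">`). This sketch assumes UK-style numbers with a leading trunk `0`; the `telLink` helper, its `class` name and the default country code are illustrative.

```javascript
// Hypothetical helper: turn a displayed UK number into an explicit tel: link.
function telLink(displayNumber, countryCode = "44") {
  let digits = displayNumber.replace(/[^\d+]/g, ""); // keep digits and +
  if (digits.startsWith("0")) {
    // Assumes a national trunk prefix of 0, replaced by +<country code>.
    digits = `+${countryCode}${digits.slice(1)}`;
  }
  return `<a class="tel" href="tel:${digits}">${displayNumber}</a>`;
}

console.log(telLink("020 7946 0000"));
// <a class="tel" href="tel:+442079460000">020 7946 0000</a>
```

Giving `.tel` a deliberate style in your CSS means the number looks the way you intended on every device.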

Analytics

Whatever your choice, make sure it’s set up on every page and recording. Use a test account during development, and don’t forget to switch to the live tracking code as part of your launch process.
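One way to make that switch hard to forget is to choose the tracking ID from the hostname at runtime, so UAT always reports to the test account. The IDs and hostnames below are placeholders.

```javascript
// Placeholder IDs: use the real property IDs from your analytics account.
const TRACKING_IDS = { production: "UA-XXXXXXX-1", test: "UA-XXXXXXX-2" };
// Hypothetical live hostnames; anything else (UAT, localhost) counts as test.
const PRODUCTION_HOSTS = new Set(["example.com", "www.example.com"]);

function trackingIdFor(hostname) {
  return PRODUCTION_HOSTS.has(hostname) ? TRACKING_IDS.production : TRACKING_IDS.test;
}

// In the page you would pass window.location.hostname.
console.log(trackingIdFor("uat.example.com")); // UA-XXXXXXX-2
console.log(trackingIdFor("www.example.com")); // UA-XXXXXXX-1
```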

Analytics base line

A brand-new site has nothing to compare its analytics against, but with a migrated site you want to ensure any drop-off in traffic is minimised, and preferably that traffic increases; this is why your SEO work (redirecting URLs, etc.) is critical. However, you must accept that with a relaunched site things are different and traffic will vary, which is why you need to draw a baseline. Compare each following month’s traffic to the baseline rather than to 12 months previously, until you reach the 13th month after launch. In Google Analytics, add annotations for the launch date and any other significant events to help you understand influencing factors.
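The comparison rule can be written down so nobody compares against the wrong month. This sketch assumes the baseline is a single monthly figure (for example the average of the last few pre-launch months) and that `monthlyVisits[0]` is the first month after launch; the numbers are illustrative.

```javascript
// Months 1-12 after launch compare with the pre-launch baseline; from month 13
// there is a full post-launch year, so compare with the same month last year.
function comparisonFor(month, monthlyVisits, baseline) {
  const current = monthlyVisits[month - 1];
  const useBaseline = month <= 12;
  const reference = useBaseline ? baseline : monthlyVisits[month - 13];
  return {
    against: useBaseline ? "baseline" : "same month last year",
    changePercent: Math.round(((current - reference) / reference) * 100),
  };
}

const visits = [9000, 9400, 9800, 10100, 10300, 10600, 10800, 11000, 11100, 11300, 11500, 11600, 11700];
console.log(comparisonFor(1, visits, 10000)); // month 1 vs baseline
console.log(comparisonFor(13, visits, 10000)); // month 13 vs month 1
```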

Link check

Check all links, including links to images and files, to ensure they are relative and not pointing to your local drive (worst-case scenario: your C: drive!).

My method for this is to push the site up into a secure password protected stage/UAT environment – often a subdomain set up for this. Then I run a Screaming Frog crawl on the site and check the results.
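As a rough complement to the crawl, you can scan saved HTML for links that point at a local machine. This sketch uses a regular expression rather than a real HTML parser, and the list of patterns (`file:` URLs, Windows drive letters, `localhost`) is an assumption to extend.

```javascript
// Assumed patterns that should never appear in a live site's links.
const LOCAL_PATTERNS = [
  /^file:/i, // file:///Users/... or file:///C:/...
  /^[a-z]:[\\/]/i, // Windows drive paths such as C:\ or C:/
  /^https?:\/\/(localhost|127\.0\.0\.1)\b/i, // local dev servers
];

// Return every href/src value in the HTML that matches a local pattern.
// A regex is a rough check, not a real HTML parser.
function localLinks(html) {
  const found = [];
  const attr = /\b(?:href|src)\s*=\s*["']([^"']+)["']/gi;
  let match;
  while ((match = attr.exec(html)) !== null) {
    const target = match[1].trim();
    if (LOCAL_PATTERNS.some((pattern) => pattern.test(target))) found.push(target);
  }
  return found;
}

console.log(localLinks('<a href="/about-us">About</a><img src="C:\\site\\logo.png">'));
```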

Hosting details

Have you got all the details needed to log into the hosting? Test it. Check what the server type is and find out any limitations.

404 page and any other fail pages you need

Add it to the URL list! It should also be treated as a content page, as you will want to improve the user journey for anyone arriving there: perhaps a simple set of top-level section links to help users find what they are looking for, plus a search function if available.

robots.txt

Set up the robots.txt file to allow everything, or to exclude anything you don’t want crawled by search engines.
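A minimal sketch of generating the file, assuming a single rule set for all crawlers: an empty `Disallow:` line allows everything, and the optional `Sitemap:` line points crawlers at your XML sitemap. The paths and URL are illustrative.

```javascript
// Build robots.txt for all crawlers from a list of paths to keep out.
function robotsTxt({ disallow = [], sitemapUrl } = {}) {
  const lines = ["User-agent: *"];
  if (disallow.length === 0) {
    lines.push("Disallow:"); // an empty Disallow allows everything
  } else {
    for (const path of disallow) lines.push(`Disallow: ${path}`);
  }
  if (sitemapUrl) lines.push("", `Sitemap: ${sitemapUrl}`);
  return lines.join("\n") + "\n";
}

console.log(robotsTxt({ disallow: ["/admin/", "/search"], sitemapUrl: "https://www.example.com/sitemap.xml" }));
```

For a UAT subdomain, `robotsTxt({ disallow: ["/"] })` keeps well-behaved crawlers out of the whole staging site.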

humans.txt

Like robots.txt but this file is about the humans involved in the site build. Useful info for you if you need to go back to the site and also others who take it over in the future. More at humanstxt.org

Fonts

Check the fonts in your CSS and make sure the licences are in place once you and the client have decided on the final typeface (hopefully at the design stage and not the day before launch). Remove any unused files from the fonts folder.

Development code snippets

Ensure you have removed any development hooks. For example, in Hammer for Mac (http://hammerformac.com) there is a page refresh comment: very handy for auto-refreshing pages during the build-out, but pointless once the site is live.

CDN

Content delivery networks (CDNs) are not new, but the price point has recently dropped dramatically with the arrival of Cloudflare. Potentially every site can now use a CDN to reduce the load on its servers, speed up access to the website and combat some threats. CDNs are especially useful for sites with massive images.

Tools

SEO Crawler

I use possibly the best-named software ever, Screaming Frog SEO Spider. A small UK agency took the old and venerable Xenu link checker and gave it some superpowers. I use it to check a site during UAT and build a snagging list of items to address, including broken links, page titles, meta descriptions, and h1 and h2 headings. You can also quickly see whether the search bots will be able to crawl the site easily, which matters if you want traffic, and, if there are pages you don’t want search bots indexing, it confirms those are excluded. For those of you with deeper pockets, have a look at DeepCrawl. All the pages in the URL map should appear in the crawl: any that don’t might be orphans, and any additional ones need investigating.
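The URL map comparison is a pair of set differences. This sketch assumes both lists have been exported in the same form (for example root-relative paths) and already de-duplicated; the example paths are illustrative.

```javascript
// Compare the planned URL map with what the crawler actually found.
function compareWithCrawl(urlMap, crawled) {
  const planned = new Set(urlMap);
  const found = new Set(crawled);
  return {
    possibleOrphans: urlMap.filter((url) => !found.has(url)), // planned, never reached by links
    unexpected: crawled.filter((url) => !planned.has(url)), // crawled, not in the URL map
  };
}

console.log(compareWithCrawl(
  ["/", "/about-us", "/contact"],
  ["/", "/about-us", "/about-us/old-page"]
));
```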

For existing sites being upgraded, I run a Screaming Frog crawl on the current site, ignoring robots.txt and nofollow meta so that I can pick up as many URLs as possible. After mapping those, I check what Google has indexed and compare the two lists. All URLs from both lists need to be considered for redirection.
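Combining the two lists and removing anything that still exists on the new site leaves the URLs that need a redirect decision. This sketch assumes all three lists use root-relative paths; mapping each candidate to its new URL is still a manual job.

```javascript
// URLs exposed by the old site (crawl + Google's index) that don't exist on the
// new site need a redirect decision. All lists are assumed to be root-relative paths.
function redirectCandidates(oldCrawl, indexedByGoogle, newUrlMap) {
  const newUrls = new Set(newUrlMap);
  const oldUrls = new Set([...oldCrawl, ...indexedByGoogle]); // union, de-duplicated
  return [...oldUrls].filter((url) => !newUrls.has(url)).sort();
}

console.log(redirectCandidates(
  ["/", "/about.html", "/news.php?id=3"],
  ["/about.html", "/products.html"],
  ["/", "/about-us", "/products"]
));
```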

Site Quality

Nibbler is a great free tool for running checks on your site, but it only tests a random sample of five pages, which may be enough for you. For a full site-wide check, switch to its big brother Sitebeam, which has more comprehensive reports. Again, this is something you might want to sell on to a customer as part of a post-launch maintenance package: a monthly report on the quality of the site can be especially useful if the client adds content regularly themselves, and even more so if the site is in your portfolio.

Favicons

Favicons are often neglected, but there has been a small resurgence of interest this year as new devices request all sorts of icons. I am currently using the RealFaviconGenerator.net beta, which provides a comprehensive set of icons and an interesting read on what works best.

Legal and compliance

Copyright check

There are two parts to this. First, do you have all the rights in place for any non-original content on the site: images, text, video, functions, maps, etc.?

Second is the site’s copyright notice. It isn’t legally needed, as copyright exists without the © symbol, but not everyone knows that, so it acts as a deterrent. If you can, build in a year that changes automatically on 1 January; otherwise you will not get to any New Year’s Eve parties. Ever.
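A sketch of the self-updating notice, assuming you render it wherever a `Date` is available (server-side it uses the server’s clock, client-side the visitor’s). `copyrightNotice` and the optional start year are illustrative.

```javascript
// Build the footer notice from a Date, so the year rolls over by itself.
function copyrightNotice(holder, startYear, now = new Date()) {
  const year = now.getFullYear();
  const years = startYear && startYear < year ? `${startYear}–${year}` : `${year}`;
  return `© ${years} ${holder}`;
}

console.log(copyrightNotice("Example Ltd", 2013, new Date(2015, 0, 1))); // © 2013–2015 Example Ltd
```

In the browser you might write the result into a footer element, e.g. `document.querySelector("footer .copyright").textContent = copyrightNotice("Example Ltd", 2013);` (the selector is hypothetical).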

Legals

You will need to ensure the site has all the legal pages it requires; a lot depends on the company’s jurisdiction, the content and the type of website.

- Terms and conditions

- Privacy statement

- EU cookie law compliance – where the target audience is EU residents. (Yes it’s still law – let’s make sure your client doesn’t become the test case.)

Are all these signed off or approved by the company solicitors or lawyers?

Launch Point

Naturally, your client will not want to spend months getting all this stuff exactly right, because that costs time and money, so you will need to prioritise these caulking items into ‘pre-launch must-haves’ and ‘post-launch phase two, we can go live without’. Often the client will not bother with, or be interested in, many of these items, as they may not seem immediately important.

Depending on the size of the site, you may need to draft in others to make up a launch team, assigning people to individual testing and checking roles at launch. If it’s just you, make a list: have a launch plan. For migrated sites, assume there are visitors still on the old site when you switch, and think about their user journey at that point. If you have two sets of servers, move traffic off one, update it, test, switch traffic to it, then update the other. If you only have one server, use live analytics, wait until no one is looking and sneak your launch in then. Each situation is different.

Launch link check

I use Screaming Frog to run through the site – this time respecting robots.txt and nofollow meta data.

Launch site check

I run a sweep using Sitebeam and then worry about the things I was unable to complete in the time allocated.

Google maps

Update the maps entry if you have just launched a new site.

Google Webmaster tools

Point Webmaster Tools to the new sitemap.xml file.

Site monitor

Set up a monitoring service for the site to report uptime and alert you when the site is down. Some services let you set up user journeys, including log-in and form functions, to be tested every few minutes, so that you can constantly check the site remains in good health; this is especially important where you have transactions. Who has had a contact form break months ago without anyone noticing?
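Hosted monitoring services do this for you, but the core of a content-aware check is small. This sketch fetches one page and treats it as healthy only if it returns a 2xx status and contains an expected marker, so a "200 OK" error page still fails. It assumes Node 18+ or a browser for the global `fetch`, the URL and marker are placeholders, and it doesn’t script multi-step journeys such as logging in.

```javascript
// Minimal single-page uptime check. fetchImpl defaults to the global fetch and
// is a parameter so the check can be exercised without a network.
async function checkPage(url, { mustContain, timeoutMs = 10000, fetchImpl = globalThis.fetch } = {}) {
  const controller = new AbortController();
  const timer = setTimeout(() => controller.abort(), timeoutMs); // give up on slow responses
  const started = Date.now();
  try {
    const res = await fetchImpl(url, { signal: controller.signal });
    const body = await res.text();
    // Healthy = 2xx status and, optionally, the expected marker in the page.
    const ok = res.ok && (!mustContain || body.includes(mustContain));
    return { url, ok, status: res.status, ms: Date.now() - started };
  } catch (err) {
    // Network errors and timeouts (aborts) both count as "down".
    return { url, ok: false, error: err.message, ms: Date.now() - started };
  } finally {
    clearTimeout(timer);
  }
}

// Usage (real request; placeholder URL and marker):
// checkPage("https://www.example.com/contact", { mustContain: "<form" }).then(console.log);
```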

Analytics

I produce day one, week one and month one analytic reports on the new site’s progress. It helps if there are proper Goals and Key Performance Indicators to measure the site against as well.

Wrap

Boat launched, crack the champagne over the bow and have a celebratory drink! And then get ready to fix all those things you didn’t have time for prior to launch.

These are just a few of the things we have to think about when building websites these days. Every project and team will be different, meaning a different set of items to consider. Build your own checklists and adapt them to your workflow but, most importantly, when you’re involved in a project, make sure all these things are considered and listed at the project kick-off and not the day after launch!